Why Don't We Treat AI like We Treated Wikipedia?

We spent years mocking anyone who cited Wikipedia. So why are we treating AI chatbots like infallible oracles?

I have distinct memories from 20 years ago of high school teachers and college professors railing against the use of Wikipedia in research papers.

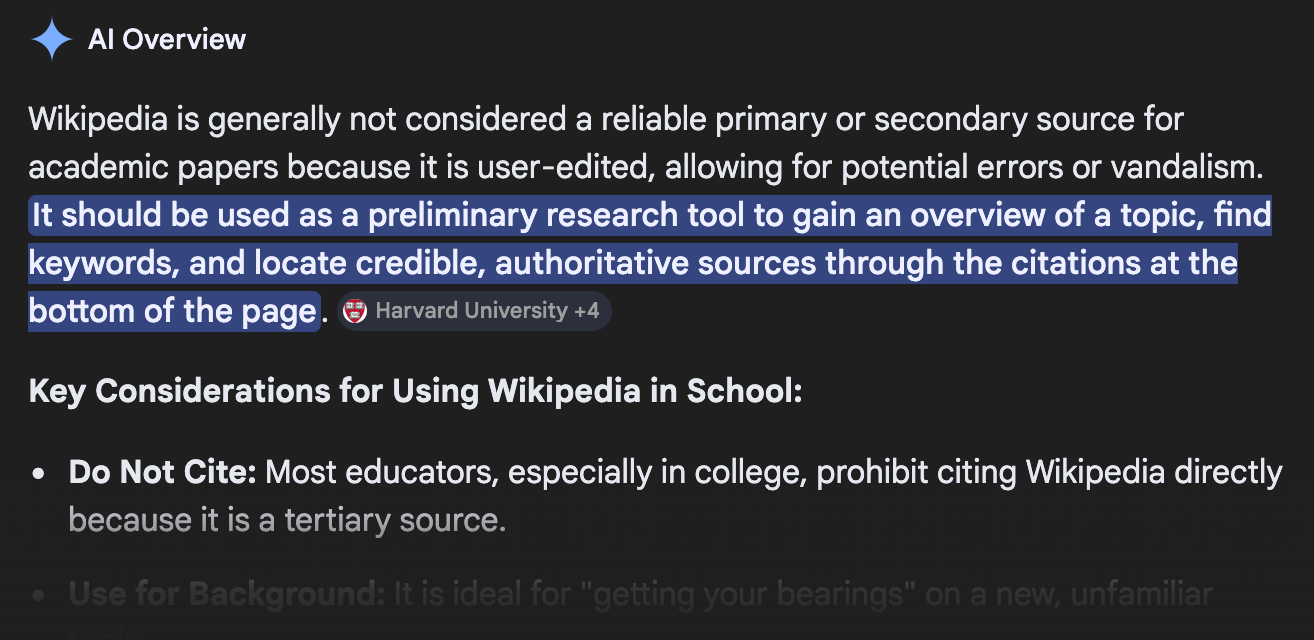

Their logic, which wasn’t without merit, was that anyone could write anything they wanted on Wikipedia pages, so none of it was reliable enough to be used in a scholarly work.

Fast forward 20 years, and I feel like a large number of people are taking the answers coming out of AI chatbots as entirely factual and accurate. Citing something off Wikipedia in even casual conversation was considered lame. Now, people accept the Google Gemini answer at the top of a search as completely accurate.

Where did our skepticism go?

A Generational Understanding

My educational experience happened during one of the biggest transitional periods in history. The generation before me didn’t have constant access to the internet and its collection of information. The generations after me have only ever known that.

When I was in high school, the internet augmented my education. By the time I graduated college, it was fully ingrained as an invaluable resource.

I was also largely taught by individuals who weren’t on the cutting edge of technology. They feared what they didn’t know. I remember having a conversation with an older professor who insisted I wasn’t allowed to reference an ebook, but that the print version was fine.

You might be able to chalk it up to cynicism, too.

But whatever the reasoning, by the time Wikipedia became mainstream, the idea that it could be an actual resource was squarely rejected.

The Mechanics of Wikipedia

If you have spent any time on Wikipedia, you understand the mechanics. Anyone, for better or worse, can edit articles with new information. It is meant to be a living documentation of everything we know about our universe.

A lot of it is cited. There’s also a dedicated group of moderators who try to police the changes as best they can.

But agendas inevitably get pushed. Poor sources get cited. Like anywhere, it’s impossible to stop the tide of misinformation and disinformation that seeps into every crack of the internet.

Without a doubt, educators were right to ban its use. Friends who gleefully discredited you if you pulled up a Wikipedia article to win an argument kinda had a point.

Wikipedia, for all its faults, though, is a great resource and is a reflection of the information we have available. It’s ostensibly run by real humans, who of course are capable of faults.

The Training of AI

AI, though, seems to have a reputation of infallibility with those who aren’t familiar with its weaknesses.

For most people, it’s difficult to understand how knowledge is generated in an LLM. By thinking that AI is some magical black box of all knowledge, they’re missing what makes AI truly dangerous.

Because AI is being trained on the data it finds online, people have to start realizing that its ability to synthesize information is entirely dependent on the quality of the input.

This very blog article could be picked up by an LLM. Any lies, misunderstandings, or misinformation I put into it could simply be spread by an unwitting AI agent eager to serve you *something* of value. Language models are literally being trained on content generated by themselves or by other models.

I’ve read many different case studies that talk about how AI is revolutionizing journalism, law, and even research. How, then, is this any different than simply using a site like Wikipedia as a source? Do they not see they are making the mistake we so heavily criticized before?

The dangerous part is the level of authority with which AI agents will simply spew misinformation. They’ll hallucinate entire scholarly works, but make them sound so convincing that you wonder how they couldn’t be true.

They’ll even argue back to you about how they’re correct and you’re wrong. Or, they’ll eagerly validate your bias by conceding points incorrectly. If they get the sense the person they’re talking to wants them to say something, they’re obliged to state it as fact.

The commercial viability of these products depends on ensuring that you remain reliant on, and slightly infatuated with, them. If they suddenly challenge your political beliefs with hard facts, are you more or less likely to continue using them? Think of it as a friend… Do you want to surround yourself with people who are consistently correcting you, or would you rather spend time with people who make you feel like you’re right?

AI has been built to fulfill this need for you, and facts be damned. If you use AI for more than a couple of days, you’ll quickly run into this phenomenon. Is it a predictable consequence? Probably, but I don’t see Sam Altman or Dario Amodei rushing to address it, no matter what they might say to the contrary in their public statements.

The Consolidation of Fact

Wikipedia was meant to be open for all. You can see when edits were made, and by whom. The history of changes allows for open debate of ideas. While people criticize this openness, it’s something we’ll soon come to miss.

With the reliance on AI, we no longer know where our information is coming from, whether it’s being manipulated, or whether a dissenting opinion is being silenced. In a sense, it is a magical black box. Everything it does is proprietary to the company that built it.

This is far from the scandals of poor journalism we’ve experienced in the past. If you disagree with an article, you know who wrote it. You can understand where the bias of an MSNBC journalist might be entering. With AI, it’s difficult to understand its bias because it’s always changing based on who it’s speaking to. Again, there is very little value in training an AI that will challenge you. The money to be made is in giving everyone their own sycophantic assistant.

This is a shift in the way we even understand the world and what a fact actually is. We’re now trusting corporations to serve us a version of facts without guardrails.

The AI Generation

The first generation that grows up with AI as a constant in their lives will have their work cut out for them in many ways.

The ability to critically think is going to become more necessary than ever. Five years ago, we were concerned that students wouldn’t be able to tell if a news story was accurate or not. This was before AI hit the mainstream. Now, there’s even more to be worried about, and it’s more difficult to fact check the volume of information that may be inaccurate.

We have to know when and how to question AI. We have to know where sources can be found that are checked by unbiased moderators. We have to have sources that aren’t completely products of AI.

If we don’t start putting a focus on the critical thinking skills that allow us to understand these things, we’re doomed to lose the battle against misinformation. My teachers and professors were afraid that I would misrepresent some fact about a battle in World War II. Now, we have to worry about whether we’re misrepresenting the facts right before our eyes, and whether we can even believe what we’re seeing.

Where Wikipedia has its own challenges, the challenges of using AI for our understanding of topics are much more existential. Are we OK with letting corporations shape our thoughts in such an explicit way? It’s something society will have to grapple with, and soon.